Project Overview

With the increasing use of collaborative robots in soft manufacturing, there is an ever-growing need for K-12 educational technologies and frameworks that will increase the technological literacy of the next generation and better prepare young students for the jobs of the future. Robotics has had limited success entering the formal K-12 classroom, and when it does, learning to use these technologies is challenging because the data produced or perceived by robots remains hidden from student and teacher alike. For example, even a relatively simple robot can have dozens of different sensors whose readings are processed by a variety of software modules that drive the robot's behavior. Thus, the inability to visualize the complex workflows in such systems can be a barrier to entry for students encountering these technologies for the first time.

To address this lack of transparency, our project proposes to develop an Augmented and Virtual Reality toolkit and framework for robotics that will allow both teachers and students to "see the unseen" and "think like a robot" when learning to program and debug the next generation of digital tools that are increasingly present in collaborative manufacturing environments. The long-term goal of our research is to integrate augmented and virtual reality interfaces in K-12 STEM education. The main objective of this proposal is to develop and evaluate a set of 5G-enabled AR and VR tools for robotics in the K-12 classroom. We hypothesize that, by visualizing data and information flow, AR and VR interfaces will provide transparency that improves student understanding of complex workflows in the context of robotics. We further anticipate that our existing prototypes will be greatly enhanced through the use of 5G wireless technologies, enabling the transmission of high-volume data between multiple K-12 educational sites.

We will achieve our objective by pursuing the following specific aims:

- Implement 5G communication protocols for our existing AR/VR robotics prototype systems

- Deploy the proposed system in one or more local K-12 facilities

- Conduct a case study to evaluate the effectiveness and usability of the 5G-enabled system in the K-12 classroom

Overview of Current Prototypes

Below we describe three existing prototypes that we have developed for Virtual and Augmented Reality-enabled human-robot interaction.

Augmented Reality for the EV3 robot

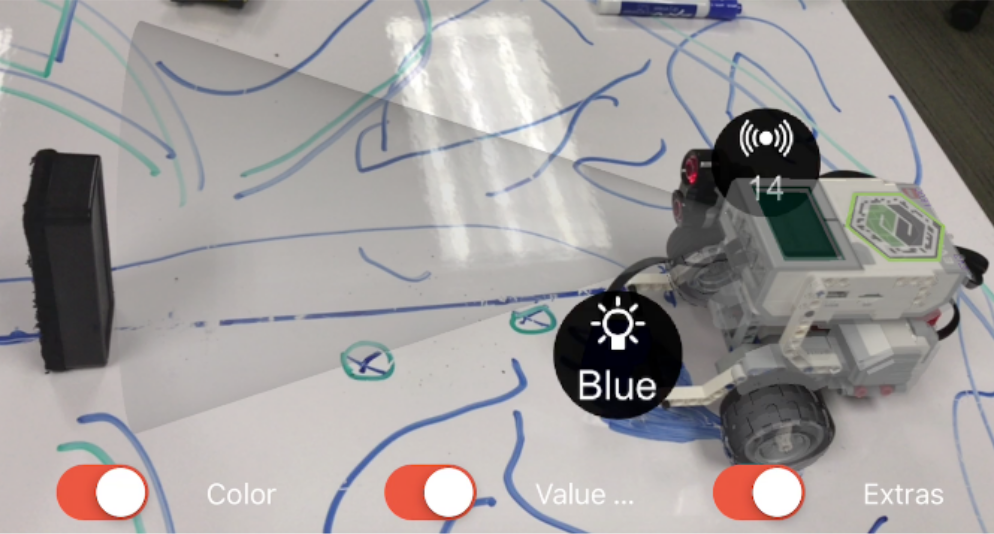

As the most common robot used in K-12 settings, the EV3 robot was the initial target for our work. The goal of the developed system is to allow a non-expert user to visualize complex data perceived by, or generated by, a robot in context, overlaid on top of the user's visual input rather than on a separate screen. The AR view shows two different types of sensor readings projected onto the physical scene: 1) the robot's sonar-based distance sensor, visualized as a cone; and 2) the robot's color sensor, visualized via text. An example AR view of the EV3 robot is shown below:

In the Fall of 2017, we used our prototype to run a pilot study with fourteen 8th graders in a private school just outside of Boston. The students, working in teams of 2 (with one team of 3), were asked to program an EV3 robot to navigate mazes on a mat that were purposefully designed to confuse the robot's color and sonar sensors. Students actively used the AR application to debug their robot's behavior as they realized that, in certain contexts, the robot's sensor readings cannot be assumed to be correct. Example uses of the tablet-based AR application during debugging are shown below:

Further details on our EV3 prototype and the results from the case study are available at:

Cheli, M., Sinapov, J., Danahy, E., Rogers, C. (2018), "Towards an Augmented Reality Framework for K-12 Robotics Education", In proceedings of the 1st International Workshop on Virtual, Augmented, and Mixed Reality for Human-Robot Interactions (VAM-HRI), Chicago, IL, Mar. 5, 2018.
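The sonar cone overlay described above for the EV3 prototype can be illustrated with a small geometric sketch. This is not the prototype's actual code: the function name `sonar_cone_outline`, the 15-degree half-angle, and the segment count are hypothetical values chosen for illustration. It computes a 2D cross-section of the cone in the sensor's own frame, which an AR renderer could then project onto the camera image.

```python
import math


def sonar_cone_outline(distance_m, half_angle_deg=15.0, segments=8):
    """Return 2D points (x forward, y left) outlining a sonar cone.

    The outline starts at the sensor origin and sweeps an arc at the
    measured distance. The half-angle is an assumed beam width, not a
    value taken from the EV3 sensor specification.
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    if segments < 1:
        raise ValueError("segments must be at least 1")

    half = math.radians(half_angle_deg)
    points = [(0.0, 0.0)]  # apex of the cone at the sensor
    for i in range(segments + 1):
        # Sweep from the right edge (-half) to the left edge (+half).
        angle = -half + (2 * half) * i / segments
        points.append((distance_m * math.cos(angle),
                       distance_m * math.sin(angle)))
    return points


if __name__ == "__main__":
    for x, y in sonar_cone_outline(0.5, segments=4):
        print(f"{x:.3f}, {y:.3f}")
```

A shorter distance reading yields a smaller cone, so a student can see at a glance how far away the robot believes the nearest obstacle is.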

Augmented Reality for ROS-enabled robots

The first prototype is able to visualize limited types of data from the EV3 robot. The system is specific to that particular robot and two types of sensory data: 1) sonar reading, and 2) color reading. To address these limitations, we are developing tools that can scale to a much larger set of data types and robots. The Turtlebot2 is substantially more complicated than the EV3; it keeps track of its own position in a map, makes motion plans to get to goals without hitting obstacles, and processes visual data in real-time. Its software architecture is also more complex, consisting of dozens of programs that talk to each other in a variety of ways. For these reasons, the Turtlebot2 is not as common as the EV3 in K-12 settings. A major goal of this research is to lower the barrier to entry with respect to complicated robots in K-12 settings.

To that end, we have developed a software framework for interfacing robots that use the Robot Operating System (ROS, the most common open-source robotics software framework) with Unity AR/VR applications that run on tablets or head-mounted displays such as the Microsoft HoloLens. The system scales to a much larger set of sensor and cognitive robot data types, including the robot's intended path, its laser scan readings, its cost map indicating regions that the robot considers safe for traversal, and its detections of people and other objects in its environment, as shown below:

As the data generated by the Turtlebot2 robot can be high-dimensional and produced at high frame rates, we anticipate that 5G technologies will further improve the AR experience and enable transmission of such data between sites (e.g., from one school to another).
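To give a sense of the data involved, a 2D laser scan is a list of range readings taken at evenly spaced angles, and it must be converted to Cartesian points before it can be drawn as an overlay. The following is a minimal sketch of that conversion, not the framework's actual implementation. The parameter names mirror the fields of the ROS `sensor_msgs/LaserScan` message (`ranges`, `angle_min`, `angle_increment`, `range_min`, `range_max`), but the function `scan_to_points` is hypothetical and deliberately has no ROS dependency.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment,
                   range_min=0.0, range_max=float("inf")):
    """Convert polar laser readings to (x, y) points in the sensor frame.

    Readings that are NaN, infinite, or outside [range_min, range_max]
    are dropped, since they do not correspond to a valid obstacle hit.
    """
    points = []
    for i, r in enumerate(ranges):
        if math.isnan(r) or math.isinf(r):
            continue
        if not (range_min <= r <= range_max):
            continue
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


if __name__ == "__main__":
    # Three beams at 0, 90, and 180 degrees; the middle one saw nothing.
    demo = scan_to_points([1.0, float("inf"), 2.0], 0.0, math.pi / 2)
    for x, y in demo:
        print(f"{x:.2f}, {y:.2f}")
```

Each scan produces one point per valid beam, and scans arrive many times per second, which is why the volume of data sent to the AR client grows quickly.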

Virtual Reality control of a mobile manipulator robot

The third prototype was designed with the goal of enabling students to experience the world from the point of view of a mobile robot. The robot was built atop a custom omni-directional mobile base. It has two low-cost uArm Swift Pro robot arms and a 2-degree-of-freedom head with a 3D camera. The user operates the robot using the Oculus Rift VR system, which enables the user to move the robot's head and arms as well as issue movement commands to the mobile base, as seen below:

Our current prototype requires high-volume data transfer between the robot and the Unity VR application and is therefore not suitable for cases where the user is at a different site. We anticipate that 5G technologies will allow a student in one 5G-enabled school to log into a robot at a different 5G-enabled school. This has the potential to greatly enhance robotics education and to allow multiple schools to share the same hardware for the purpose of exposing students to robotics and virtual reality.

Videos

An example AR view of a Turtlebot2 robot autonomously navigating an office environment. The view shows the robot's intended motion path (shown in yellow blinking dots) as well as the readings from the robot's 2D laser scan sensor (shown in red):

An example AR view of a Turtlebot2 robot showing the output of the robot's person detection module:

A student controlling a mobile robot using Virtual Reality:

K-12 students using augmented reality to program the EV3 robot:

The Team

Our team encompasses faculty, researchers, and students at Tufts University, all of whom have extensive experience in robotics, AR/VR, or K-12 STEM education:

Jivko Sinapov

Assistant Professor

Department of Computer Science

[Academic Page] [LinkedIn Page] [CV]

Chris Rogers

Professor and Chair

Department of Mechanical Engineering

[Academic Page] [LinkedIn Page]

Jennifer Cross

Postdoctoral Associate

Tufts' Center for Engineering Education and Outreach

[Academic Page] [LinkedIn Page]

Andre Cleaver

Graduate Student

Department of Mechanical Engineering

[LinkedIn Page]

Amel Hassan

Undergraduate Student

Department of Computer Science

[LinkedIn Page]

Faizan Muhammad

Undergraduate Student

Department of Computer Science

[LinkedIn Page]

Publications

Muhammad, F., Hassan, A., Cleaver, A., Sinapov, J. (2019)

Creating a Shared Reality with Robots

Submitted to the late-breaking reports track of the 14th Annual ACM/IEEE International Conference on Human-Robot Interaction

Cheli, M., Sinapov, J., Danahy, E., Rogers, C. (2018)

Towards an Augmented Reality Framework for K-12 Robotics Education

In proceedings of the 1st International Workshop on Virtual, Augmented, and Mixed Reality for Human-Robot Interactions (VAM-HRI), Chicago, IL, Mar. 5, 2018.

In the Media

Hands-on Research for Undergraduates: From robots to bee pollen, Summer Scholars are learning the value of research firsthand with Tufts faculty

In Tufts Now, Published Jul. 25th, 2018.

Sponsorship

Our existing work at the intersection of AR/VR and Robotics is funded by: